The Setup

app.get('/user/:id', async (req, res) => {

const user = await db.query('SELECT * FROM users WHERE id = 42', [req.params.id]);

res.json(user);

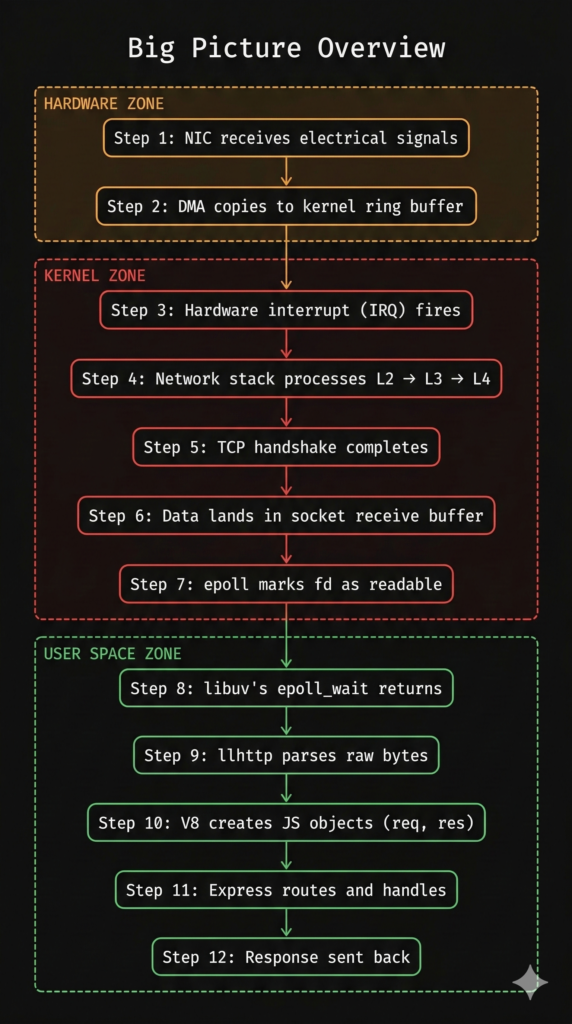

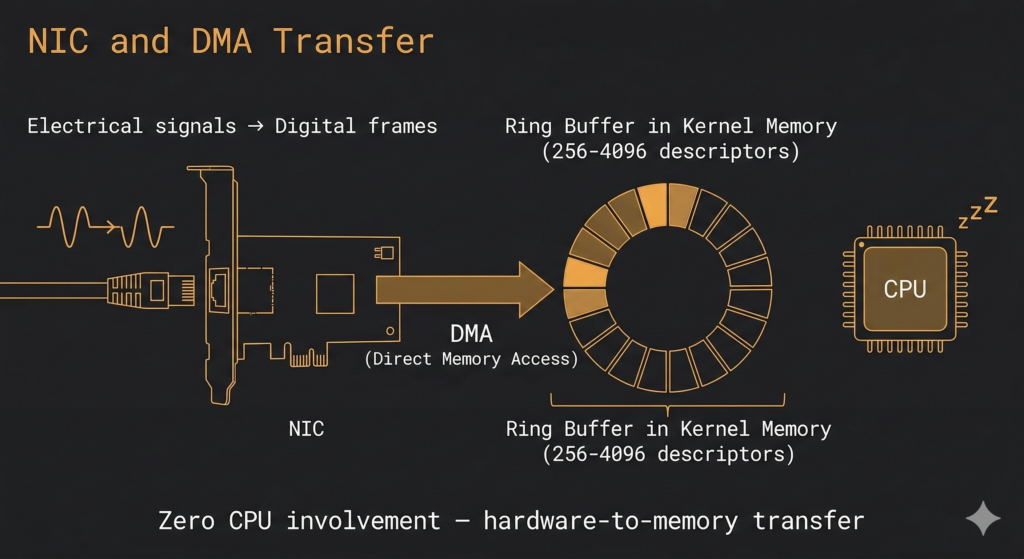

});Lets say a client sends GET /user/42 to a node.js server hosted somewhere. The request travels as electrical signals or light pulses through cables and reaches the network interface card (NIC) of the server. NIC uses direct memory access (DMA) to copy the data to a ring buffer in memory. Then the NIC sends a hardware interrupt (IRQ) to the CPU. Instead of interrupts there can be polling mode also where the kernel keeps checking whenever it is free. Sometimes this is preferable instead of being interrupted when it is busy doing something.

Now the kernel's network stack starts processing the packet layer by layer. It checks whether the packet is really addressed to this machine, whether the firewall allows it through, and so on. The TCP layer uses the source IP, source port, destination IP and destination port to find the matching socket, then queues the payload (the HTTP request bytes, carried in sk_buff structures) on that socket's receive buffer. The kernel then marks the socket's file descriptor (fd) as readable.

This is like a customer walking into a restaurant with a reservation envelope in hand. The host at the podium checks the reservation: verifying the customer's identity (MAC), checking they are at the right restaurant (IP), and confirming the booking details (port). In this case let's say everything checks out.

The host assigns them Table 42, seats them, and writes a note on the clipboard: “Table 42 – Ready to order”.

Libuv gets notified – The waiter checks the clipboard.

When we start a server with server.listen(3000), the listening socket is registered with libuv's event loop, which keeps cycling through these phases:

timers -> pending callbacks -> idle/prepare -> poll -> check -> close callbacks

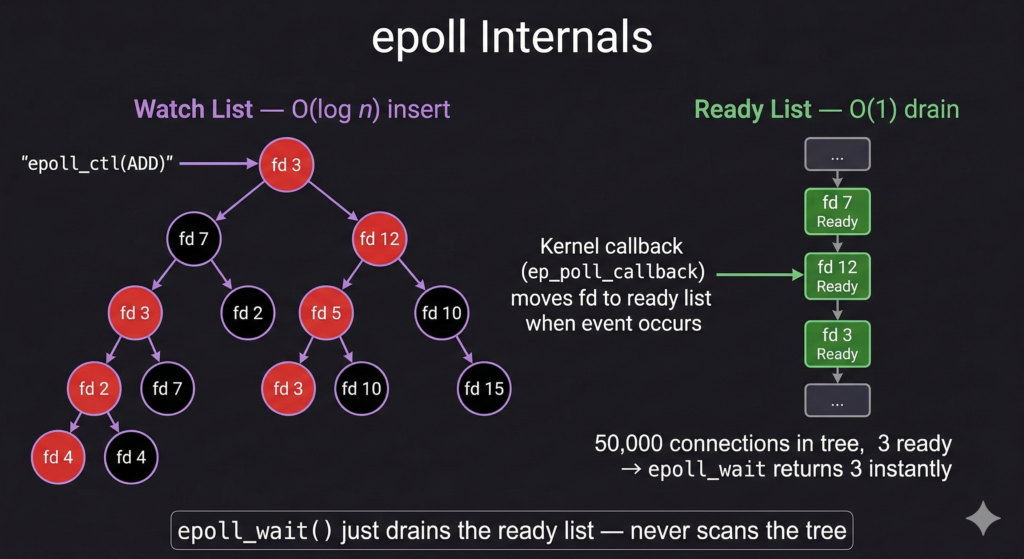

During the poll phase, libuv calls epoll_wait() (on Linux). If nothing is ready, the thread goes to sleep. Before this request arrives, the process is completely idle: no activity whatsoever.

Now the packet has arrived and the kernel has put fd 42 on epoll's ready list. epoll_wait() returns, telling libuv “File descriptor 42 has data”. libuv calls read() on the socket and pulls in the HTTP bytes.
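The phase order can be observed from JavaScript. The following is a minimal sketch, assuming an `fs.stat` callback as a stand-in for the socket read (both are I/O callbacks that run in the poll phase); the names `order` and `phasesDone` are made up for illustration. From inside an I/O callback, `setImmediate` (check phase) runs before a 0 ms `setTimeout` (timers phase of the next loop iteration).

```javascript
const fs = require('node:fs');

const order = [];

// Resolves once both callbacks have run, so the order can be inspected.
const phasesDone = new Promise((resolve) => {
  // This callback runs in the poll phase, like the socket read in the text.
  fs.stat('.', () => {
    setTimeout(() => {
      order.push('timers'); // next loop iteration, timers phase
      resolve(order);
    }, 0);
    setImmediate(() => order.push('check')); // same iteration, right after poll
  });
});

phasesDone.then((result) => console.log(result.join(' -> ')));
```

Scheduled from the main module instead of an I/O callback, the relative order of the two is not guaranteed, which is why the sketch schedules them from inside the poll phase.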

The kitchen is sleeping (or chit-chatting) as there are no pending orders. Our waiter has been walking in a loop: checking his watch, handling any pending tasks, glancing at the clipboard. This time the clipboard has a new entry: “Table 42 – Ready to order”.

The waiter grabs the note and heads toward Table 42.

The HTTP Parser Processes Raw Bytes – The waiter listens to the order with extreme precision.

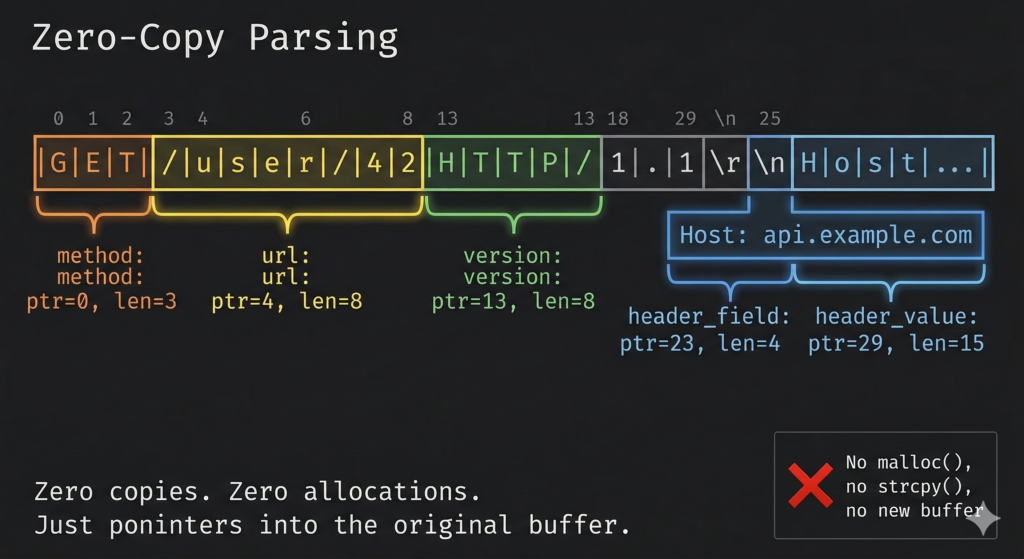

Libuv reads the raw bytes of the request which can look something like:

GET /user/42 HTTP/1.1\r\nHost: api.example.com\r\nAccept: application/json\r\n\r\n

These bytes are fed to llhttp, the HTTP parser whose C code is generated by llparse. As it completes each section, it fires a callback:

- “GET” -> on_method_complete

- “/user/42” -> on_url_complete

- (“Host”, “api.example.com”) -> on_header_field + on_header_value

- finally -> on_headers_complete

- Common headers like host, content-type and accept use pre-created V8 string handles, so these frequent headers need no allocation per request.
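The callback sequence above can be imitated with a toy parser. This is only a sketch: it is not llhttp, it works on one complete string rather than on incremental byte chunks, and `parseRequest`, `parsed` and `currentField` are hypothetical names. Only the callback names mirror the ones listed above.

```javascript
// Toy parser: NOT llhttp, it only borrows the callback names for illustration.
function parseRequest(raw, callbacks) {
  const headerEnd = raw.indexOf('\r\n\r\n');
  if (headerEnd === -1) throw new Error('incomplete request head');

  const [requestLine, ...headerLines] = raw.slice(0, headerEnd).split('\r\n');
  const [method, url] = requestLine.split(' ');
  callbacks.on_method_complete(method);
  callbacks.on_url_complete(url);

  for (const line of headerLines) {
    const colon = line.indexOf(':');
    callbacks.on_header_field(line.slice(0, colon));
    callbacks.on_header_value(line.slice(colon + 1).trim());
  }
  callbacks.on_headers_complete();
}

const parsed = { headers: {} };
let currentField = null;

parseRequest(
  'GET /user/42 HTTP/1.1\r\nHost: api.example.com\r\nAccept: application/json\r\n\r\n',
  {
    on_method_complete: (m) => { parsed.method = m; },
    on_url_complete: (u) => { parsed.url = u; },
    on_header_field: (f) => { currentField = f.toLowerCase(); },
    on_header_value: (v) => { parsed.headers[currentField] = v; },
    on_headers_complete: () => { parsed.complete = true; },
  }
);

console.log(parsed);
```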

Meanwhile our waiter arrives at Table 42. The customer starts giving the order, and the waiter listens with surgical precision and writes it down in a structured notepad:

- Dish : GET

- Table : /user/42

- Special Instructions : Host: api.example.com

No paraphrasing, no interpreting. Just writing down as is.

The Request Event Fires – The waiter hands the order to the floor manager.

The C++ binding now calls into JavaScript via Node's MakeCallback(). On the JS side, two objects are created for the request:

- IncomingMessage (req) – A readable stream

- ServerResponse (res) – A writable stream

Then server.emit('request', req, res) runs, which is essentially a for-loop over the registered listeners. Here the listener is the Express app itself, which app.listen(3000) registered with the HTTP server. Since this is synchronous code, no other JS code can run at this point.

Now the waiter walks from Table 42 to the floor manager (Express) and hands over two things:
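That emit() is synchronous can be checked with a bare EventEmitter. This is a small sketch, with a plain `EventEmitter` standing in for the HTTP server and placeholder objects for req and res; `trace` is a made-up name.

```javascript
const { EventEmitter } = require('node:events');

const server = new EventEmitter(); // stand-in for http.Server
const trace = [];

// The listener plays the role of the Express app.
server.on('request', (req, res) => {
  trace.push(`listener saw ${req.url}`);
});

trace.push('before emit');
server.emit('request', { url: '/user/42' }, {}); // listeners run right here
trace.push('after emit');

console.log(trace);
```

The listener's entry lands between the other two, so emit() only returns after every listener has finished.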

- The order details slip (req) – Everything the customer said

- An empty serving tray (res) – Ready to be loaded with the dishes (response)

Until the floor manager reads it and says “OK, I've got this”, the waiter waits there. He is not taking any further orders or doing anything else.

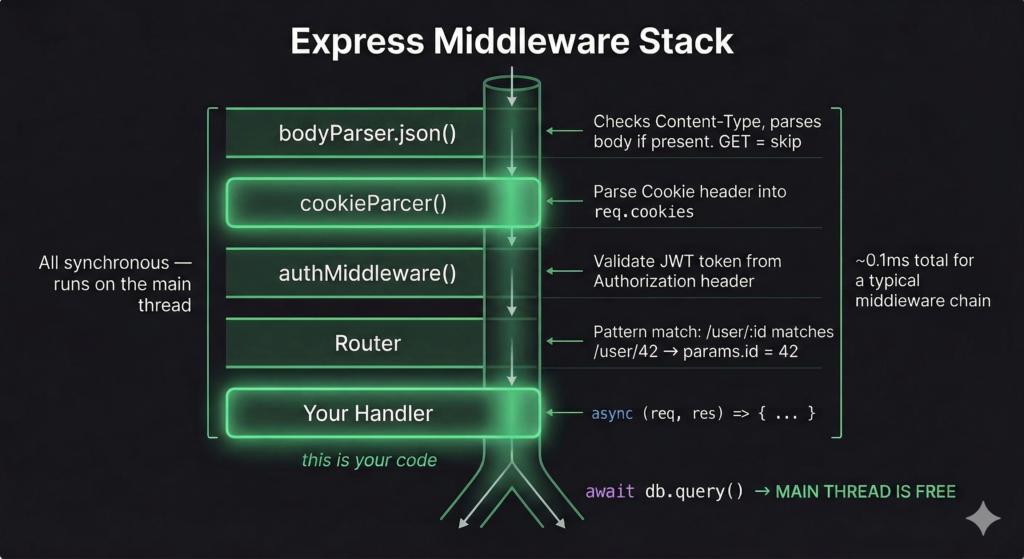

Express Routes the Request – The order passes through the prep stations.

The Express router walks through its layers:

- CORS middleware (route=null, matches any method): For normal requests, sets the Access-Control-Allow-Origin headers and calls next().

- Body parser (route=null): Checks typeis.hasBody(req) first. Our GET request has no body, so it moves on. For POST requests, it would buffer chunks and parse on end.

- Auth middleware (route=null): Runs jwt.verify(), which is synchronous work, and sets req.user. If auth fails, it doesn't call next(), which stops the chain.

- Route layer: Matches the path '/user/42' against the route '/user/:id' and sets req.params.id to '42'.
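The layer walk above can be sketched without Express. The code below is a simplified model, not Express's actual Router: `matchRoute`, `handle`, `layers` and `visited` are hypothetical names, and the middleware bodies are stubs that only record that they ran.

```javascript
// Hypothetical mini-router; Express's real Router is more involved.
function matchRoute(pattern, path) {
  const names = pattern.split('/');
  const parts = path.split('/');
  if (names.length !== parts.length) return null;
  const params = {};
  for (let i = 0; i < names.length; i++) {
    if (names[i].startsWith(':')) params[names[i].slice(1)] = parts[i];
    else if (names[i] !== parts[i]) return null;
  }
  return params;
}

function handle(layers, req, res) {
  let index = 0;
  function next() {
    const layer = layers[index++];
    if (!layer) return; // ran off the end of the stack (Express would answer 404)
    if (layer.route) {
      const params = matchRoute(layer.route, req.url);
      if (!params) return next(); // no match, try the next layer
      req.params = params;
    }
    layer.handle(req, res, next); // a layer that never calls next() stops the chain
  }
  next();
}

const visited = [];
const layers = [
  { route: null, handle: (req, res, next) => { visited.push('cors'); next(); } },
  { route: null, handle: (req, res, next) => { visited.push('auth'); req.user = { id: 1 }; next(); } },
  { route: '/user/:id', handle: (req, res) => { visited.push(`route id=${req.params.id}`); } },
];

const req = { method: 'GET', url: '/user/42' };
handle(layers, req, {});
console.log(visited);
```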

The floor manager runs through a series of stations:

- Is this dish allowed for this table? Stamps and calls next()

- Does this order have any raw ingredients? Maybe the customer has brought their own special type of bread (POST). In this case it is a normal order (GET). Stamps and calls next()

- Show me your membership card (Auth). Stamps and calls next()

- Routing – This goes to the Vegetarian station for customer ID 42.

The Database Query (Going Async) – The waiter clips the ticket to the kitchen window and walks away.

db.query() hits the node-postgres (pg) driver. The pg pool checks out an idle Client; if none is idle, the request is queued. The pool hands out idle clients in LIFO (last in, first out) order. For our parameterized query, the driver constructs 5 binary messages:

- Parse – “Prepare this sql”

- Bind – “Attach parameter '42'”

- Describe – “Tell me the result format”

- Execute – “Run it”

- Sync – “End of message batch”

All five messages are packed into a single buffer, and db.query() returns a promise immediately. I have explained promises in another article here.

The waiter clips order ticket #42 on the kitchen door, rings the bell and walks away. Once the food is ready, the kitchen will ring the bell. The waiter keeps a list of which orders have been submitted, just like the promises returned in Node.js.
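To make the five messages concrete, here is a rough sketch of how they could be framed by hand, following the message layouts in the PostgreSQL frontend/backend protocol documentation. This is not the pg driver's code: `frame`, `buildExtendedQuery` and the small helpers are hypothetical, and the sketch assumes an unnamed statement and portal, text-format parameters, and a Describe of the portal.

```javascript
// One message = 1-byte type + Int32 length (counts itself, not the type) + body.
function frame(type, body) {
  const header = Buffer.alloc(5);
  header.write(type, 0, 'latin1');
  header.writeInt32BE(body.length + 4, 1);
  return Buffer.concat([header, body]);
}

const cstr = (s) => Buffer.from(`${s}\0`, 'utf8'); // null-terminated string
const int16 = (n) => { const b = Buffer.alloc(2); b.writeInt16BE(n); return b; };
const int32 = (n) => { const b = Buffer.alloc(4); b.writeInt32BE(n); return b; };

function buildExtendedQuery(sql, params) {
  const values = params.map((p) => Buffer.from(String(p), 'utf8'));
  // Parse: statement name, SQL text, number of pre-declared parameter types
  const parse = frame('P', Buffer.concat([cstr(''), cstr(sql), int16(0)]));
  const bind = frame('B', Buffer.concat([
    cstr(''), cstr(''),   // unnamed portal, unnamed statement
    int16(0),             // no format codes: every parameter is text
    int16(values.length),
    ...values.flatMap((v) => [int32(v.length), v]),
    int16(0),             // result columns in text format
  ]));
  const describe = frame('D', Buffer.concat([Buffer.from('P'), cstr('')]));
  const execute = frame('E', Buffer.concat([cstr(''), int32(0)])); // 0 = no row limit
  const sync = frame('S', Buffer.alloc(0));
  return Buffer.concat([parse, bind, describe, execute, sync]); // one buffer, one write
}

const batch = buildExtendedQuery('SELECT * FROM users WHERE id = $1', ['42']);
console.log(batch.length, batch.subarray(-5));
```

The last five bytes are the Sync message: the type byte `S` followed by a length of 4, since Sync has no body.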

The Event Loop Keeps Spinning – The waiter serves other tables while the kitchen cooks.

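While the query is in flight, the handler is suspended at await and the event loop is free to serve other requests. This only works if the Node.js process never does anything heavy synchronously; blocking the loop with long synchronous work is an anti-pattern. That would be like the waiter getting into a long off-topic discussion with one customer. In between, he should only do light work like receiving orders from other tables or greeting new customers.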
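The cost of synchronous work is easy to measure. In this sketch a 0 ms timer stands in for "another table's order", and a 50 ms busy-wait stands in for heavy synchronous work; `started` and `timerDelay` are illustrative names and the 50 ms figure is arbitrary.

```javascript
const started = Date.now();

// Resolves with how late a 0 ms timer actually fired.
const timerDelay = new Promise((resolve) => {
  setTimeout(() => resolve(Date.now() - started), 0);
});

// Simulated heavy synchronous work: spin for about 50 ms.
while (Date.now() - started < 50) { /* busy-wait, the loop cannot turn */ }

timerDelay.then((ms) => console.log(`0 ms timer fired after ${ms} ms`));
```

The timer cannot fire until the busy-wait ends, so every other callback queued on the loop is held up for the same duration.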

Database Response Arrives – The kitchen bell rings — order up!

PostgreSQL has been working and has fetched the row. Back in Node, the PostgreSQL socket's fd is marked as readable on epoll's ready list. epoll_wait() returns and libuv reads the bytes. The driver parses the result and resolves the promise, which triggers the .then() callback. The client is returned to the pool (LIFO). If other queries are queued, the next one starts immediately.

Meanwhile, the kitchen bell rings. The chef slides a plate out of the kitchen and clips on a token: “Order 42!”. The waiter finishes whatever he is doing, then comes to grab the dish and deliver it to the table.

Async Function Resumes – The waiter picks up the dish and remembers exactly where they left off.

V8 resumes the async function where it was suspended, with the resolved value assigned to user. Execution continues to res.json(user).
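The suspend-and-resume behaviour can be traced with a stubbed query. In this sketch `fakeQuery` is a hypothetical stand-in for db.query() that resolves on a later loop iteration, and `log` records the order of events.

```javascript
const log = [];

// Stand-in for db.query(): resolves later, as a real driver would.
function fakeQuery() {
  return new Promise((resolve) => setImmediate(() => resolve({ id: 42 })));
}

async function handler() {
  log.push('handler: query sent');
  const user = await fakeQuery(); // the handler is suspended here
  log.push(`handler: resumed with id ${user.id}`);
  return user;
}

const done = handler(); // returns a pending promise as soon as await is reached
log.push('event loop: free for other work');

done.then(() => console.log(log));
```

The "free for other work" entry is logged before the handler resumes, which is the gap in which other requests get served.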

The waiter takes the tray from the kitchen straight to Table 42 and delivers the dish.

Cleanup – Another Round or Check Please?

The response is flushed. Node fires the 'finish' event on the ServerResponse, signaling that all data has been handed to the OS for sending. The TCP connection is usually kept alive for another 5 seconds so that the client can send another request, maybe GET /user/43.

This is like the customer ordering a dessert or something else without going through the whole process again. Closing the connection is like the customer paying the bill and walking out.
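The 5 second window corresponds to the HTTP server's keepAliveTimeout setting. The sketch below creates a server without calling listen(), just to read the setting and show where a 'finish' listener would go; the handler body and the commented-out 10 second value are placeholders, not recommendations.

```javascript
const http = require('node:http');

const server = http.createServer((req, res) => {
  // 'finish' fires once the whole response has been handed to the OS.
  res.on('finish', () => console.log('response handed off to the OS'));
  res.end('ok');
});

// Milliseconds an idle kept-alive socket is retained before Node closes it.
console.log(server.keepAliveTimeout);
// server.keepAliveTimeout = 10_000; // optional: tune before calling listen()
```

Behind a load balancer, this value is commonly set higher than the balancer's own idle timeout so that Node is not the side that closes a connection the balancer still considers usable.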